Medieval Muslims had very practical reasons for their interest in mathematics.

The calculation of the precise direction of prayer toward Mecca, for instance, something required for the proper orientation of mosques, relied upon relatively sophisticated mathematical operations. So did the determination of the exact date when the holy fasting month of Ramadan commenced, since, being a unit of time in a lunar calendar, Ramadan moves through the seasons.
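To give a sense of the mathematics involved, here is a minimal sketch of the prayer-direction problem in modern terms. It uses today's standard great-circle bearing formula from spherical trigonometry, not a reconstruction of any medieval method. The function name `qibla_bearing` and the coordinates used for the Kaaba are assumptions made for illustration.

```python
import math

# Approximate coordinates of the Kaaba in Mecca (assumed values for illustration).
KAABA_LAT = 21.4225
KAABA_LON = 39.8262


def qibla_bearing(lat_deg: float, lon_deg: float) -> float:
    """Return the initial great-circle bearing toward Mecca.

    The result is in degrees clockwise from true north, in the range [0, 360).
    This is the modern spherical-trigonometry formula, assuming a spherical Earth.
    """
    phi1 = math.radians(lat_deg)
    phi2 = math.radians(KAABA_LAT)
    delta_lon = math.radians(KAABA_LON - lon_deg)

    # East-west and north-south components of the direction of travel.
    east = math.sin(delta_lon) * math.cos(phi2)
    north = (math.cos(phi1) * math.sin(phi2)
             - math.sin(phi1) * math.cos(phi2) * math.cos(delta_lon))

    return math.degrees(math.atan2(east, north)) % 360.0


# Example: a city northwest of Mecca, such as Cairo, should face roughly southeast.
cairo = qibla_bearing(30.0444, 31.2357)
```

Even in this compact modern form, the calculation needs sines, cosines and an inverse tangent, which suggests why the problem encouraged serious work in trigonometry.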

The exact reckoning of inheritance according to detailed Quran-based rules also needed attention. And even in premodern times, the “hajj,” the annual pilgrimage to Mecca that constitutes one of the five “pillars” of the Islamic faith, saw tens or even hundreds of thousands of Muslims converging upon the holy cities of Arabia from all corners of the globe. Coming by land and by sea across vast distances, they required navigational skills of a high order.

But the Muslims of Islam’s classical age didn’t limit their mathematical investigations to merely practical matters. They made significant theoretical contributions, for example, to trigonometry and spherical geometry.

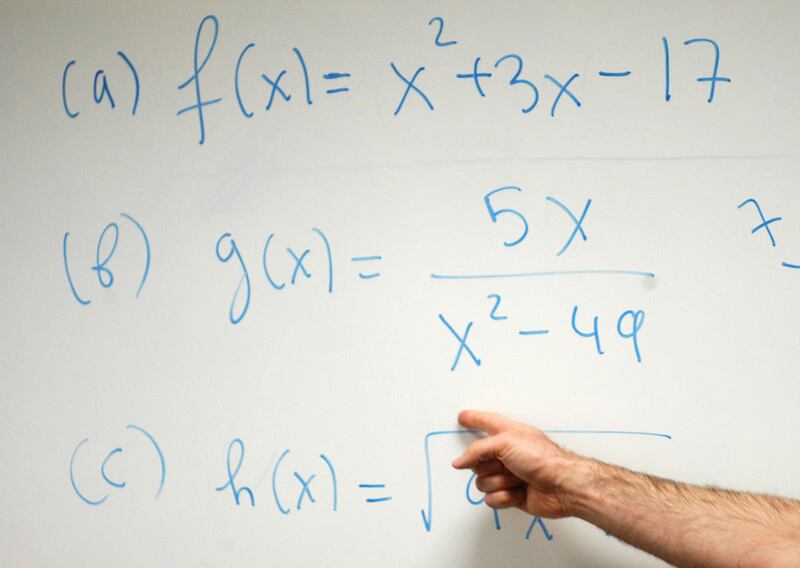

Moreover, it was an Arabic-writing mathematician, al-Khwarizmi (d. A.D. 850), who developed the systematic technique of solving for unknown quantities, a technique that later came to use letters and other non-numerical symbols to represent numerical values.

We call this technique “algebra,” using a word derived from the title of one of al-Khwarizmi’s books. (He employed the Arabic term “al-jabr,” which originally referred to “joining” or “setting” broken bones, to refer to the “joining” of mathematical quantities together, as in an equation.)

And, in fact, al-Khwarizmi’s name itself shows up in Western mathematics, albeit in distorted form, as “algorithm,” which denotes a system of rules or a process for calculations.

It was also from al-Khwarizmi that the West learned of Arabic numerals. Three centuries after his death, his mathematical works were translated by an Englishman named Adelard of Bath (d. ca. A.D. 1152). But at least two more centuries had to pass even after Adelard before Arabic numerals were generally accepted by Europeans.

Scholars in the West had found the Arabs’ concept of “zero” especially silly. It was, they said, a “meaningless nothing,” and why bother with a symbol for nothing?

Eventually, though, European mathematicians and others discovered that Arabic numerals, and the place system that came along with them, had enormous advantages over Roman numerals. (See for yourself: As an experiment, try to do long division or multiplication with “MDCCCXLVIII” and “CDXIV,” say, rather than with “1848” and “414.”) Today, our English words “cipher” and “decipher,” and even the word “zero” itself, come from the Arabic term for “emptiness” or “nothingness,” “sifr.”
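For readers who would rather let a computer run the experiment, here is a minimal sketch in Python. The helper names `roman_to_int` and `place_value_terms` are hypothetical, chosen only for this illustration. The sketch has to convert the Roman numerals into place-value form before it can do any arithmetic at all, which is the point of the comparison.

```python
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}


def roman_to_int(numeral: str) -> int:
    """Convert a well-formed Roman numeral to an integer."""
    total = 0
    for i, symbol in enumerate(numeral):
        value = ROMAN_VALUES[symbol]
        # A smaller symbol written before a larger one is subtracted (XL = 40).
        if i + 1 < len(numeral) and value < ROMAN_VALUES[numeral[i + 1]]:
            total -= value
        else:
            total += value
    return total


def place_value_terms(n: int) -> list[tuple[int, int]]:
    """Split a non-negative integer into (digit, power of ten) pairs."""
    digits = str(n)
    return [(int(d), 10 ** (len(digits) - 1 - i)) for i, d in enumerate(digits)]


a = roman_to_int("MDCCCXLVIII")   # 1848
b = roman_to_int("CDXIV")         # 414

print(a * b)                      # 765072
print(divmod(a, b))               # (4, 192): quotient 4, remainder 192
print(place_value_terms(a))       # [(1, 1000), (8, 100), (4, 10), (8, 1)]
```

In the place-value form each digit's position fixes its weight, so the same ten symbols serve for numbers of any size, and a zero simply holds an empty position, as in 1,008.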

Gradually, as the West noticed the riches that were available in Arabic scientific and mathematical writings, the pace of translation from Arabic into Latin picked up.

“From the second half of the eighth to the end of the 11th century,” writes the great Harvard historian of science George Sarton, “Arabic was the scientific, the progressive language of mankind. … When the West was sufficiently mature to feel the need of deeper knowledge, it turned its attention, first of all, not to Greek sources, but to the Arabic ones.”

A final example of such influence can be found in a simple illustration:

We commonly use the letter “x” to represent an unknown quantity. Originally, this was merely the practice in mathematics, but it eventually entered into everyday life, where we speak of things like “Brand X.”

But how did we come to choose the letter “x” for this? Why not some other letter? The answer is that the practice comes, in a roundabout way, from Arabic.

Older Spanish and Portuguese spelling used the letter “x” to represent what English-speakers know as the “sh” sound. And it so happens that the Arabic equivalent of the English word “thing” or “something” is “shay.” Thus, when the Spanish and Portuguese translators in Toledo who transmitted Arabic mathematics to the West wanted an easy-to-write symbol to represent an unknown quantity, they simply used the Roman alphabet’s equivalent of the first letter of the Arabic word for “something.”

“In the sciences,” the German scholar Enno Littmann wrote in 1924, “namely in medicine, mathematics and the natural sciences, the Arabs (or, at least, the people who had adopted the Arabic language) were the master teachers of medieval Europe.”

And the importance of these borrowings from the Islamic Middle East (and many others that space prevents us from discussing) cannot be overstated. Without them, modern science and technology would be altogether impossible.

Daniel Peterson founded BYU's Middle Eastern Texts Initiative, chairs The Interpreter Foundation and blogs on Patheos. William Hamblin is the author of several books on premodern history. They speak only for themselves.