SALT LAKE CITY — It might seem harmless. Convenient, even. It’s the technology you might use to open up your phone, or to help you tag pictures on social media.

It’s called facial recognition — technology used to identify people. And it’s on a trajectory to become ubiquitous — used in security systems for crowded places, such as malls, airports and schools.

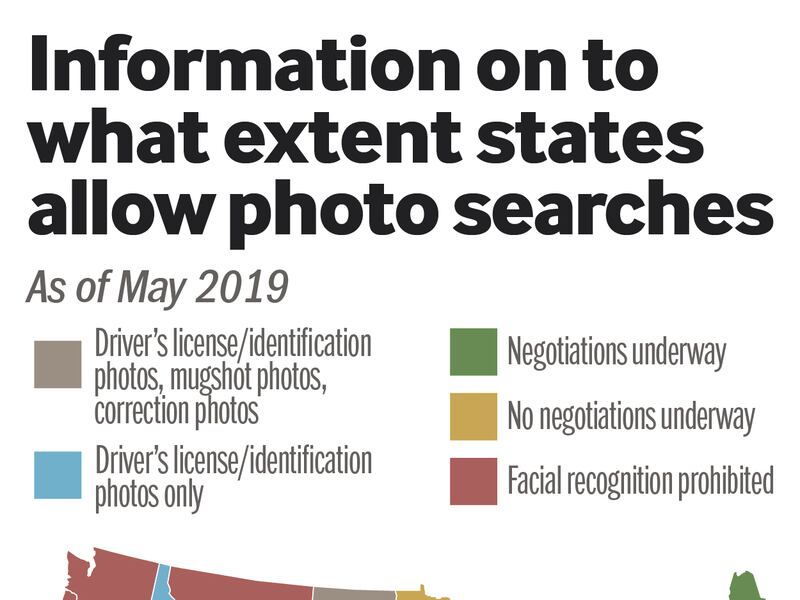

It’s already being used by the FBI, as well as state and local law enforcement agencies across the country, to help police find suspects accused of everything from shoplifting to terrorism.

But for all of its benefits, the technology is not without its flaws. Researchers have raised concerns about the technology’s accuracy and its racial and gender bias, as well as its serious implications for privacy and free speech.

Such concerns aren’t merely theoretical. Mistakes made by the technology have already led to the arrest of innocent people.

In July 2018, the American Civil Liberties Union tested Amazon’s facial recognition system by scanning the faces of all 535 members of Congress against 25,000 public mug shots. The software incorrectly matched 28 members of Congress — disproportionately people of color — identifying them as other people arrested for a crime.

Last month, the University of Colorado took heat for a federally funded project that tested face recognition technology by using cameras to identify 1,700 passersby on campus without their knowledge or consent.

Such events have prompted an outcry from both the private and public sectors. Last year, Microsoft asked Congress to start regulating the technology. Amazon shareholders are pressuring the company to stop selling facial recognition technology to the government. In May, San Francisco became the first major city to ban the use of facial recognition technology by police and other local agencies.

And now, such concerns have reached the ears of Congress. In two hearings, the most recent of which took place on June 4, the House Committee on Oversight and Reform took a closer look at how the government is using facial recognition technology.

Their take? Fix it, or stop using it.

What experts are concerned about

Critics question the effectiveness of law enforcement using software known for accuracy problems, especially in telling apart the faces of women, young people and people of color.

A 2018 MIT study found that when the person in the photo is a white man, face recognition software is right 99 percent of the time, but the darker the skin, the more errors arise — up to nearly 35 percent for images of darker-skinned women.

Experts also believe the technology has serious implications for privacy and free speech.

While the government regulates other police investigative tools, such as wiretaps and fingerprints, there is a "complete absence of law" governing the use of face recognition by police, Clare Garvie, an associate with the Center on Privacy and Technology at Georgetown Law, told the Deseret News in September 2018.

The technology could have a “chilling effect” on protests, as people may be less likely to march along the streets if they believe cameras are tracking their whereabouts.

“The impact this has on First Amendment activity are incredibly troubling and should not be understated,” she said.

The concerns about privacy become more troubling when discussing the potential for the technology to be used in police body cameras, Matt Cagle, technology and civil liberties attorney at the ACLU of Northern California, told the Deseret News in September.

He says body cameras were originally intended to be a way to keep officers accountable to the public for their actions, but face recognition transforms their use into a tool to track and target members of the public.

"Face surveillance should never be used with body cameras. It turns a tool for government accountability into a tool for government spying," says Cagle.

What Congress is saying

At the committee's first hearing on the matter in May, titled "Facial Recognition Technology: Its Impact on our Civil Rights and Liberties," lawmakers examined the need for oversight of how government and commercial entities use the technology on civilians, and highlighted regulation as a bipartisan concern.

"You've now hit the sweet spot that brings progressives and conservatives together," Rep. Mark Meadows, R-N.C., said during the hearing. "The time is now before it gets out of control."

Rep. Alexandria Ocasio-Cortez, D-N.Y., said that facial recognition threatens American values.

"It's extraordinarily encouraging that this is a strong, bipartisan issue," she said. "This is about who we are as Americans, and the America that is going to be established as technology plays an increasingly large role in our societal infrastructure."

At the second hearing in early June, titled "Facial Recognition Technology: Ensuring Transparency in Government Use," lawmakers focused on how specific government agencies, including the FBI and the Transportation Security Administration, have been using the technology.

The FBI took heat for failing to meet the Government Accountability Office’s recommendations on privacy, accuracy and transparency.

In 2016, when researchers first published numbers on how many photos were included in FBI facial recognition databases, they said the bureau had access to photos of 117 million Americans. Since then, the number of photos the bureau can search has jumped to 641 million, according to the Government Accountability Office.

"They still haven't fixed the five things they were supposed to do when they started," Rep. Jim Jordan, R-Ohio, said at the hearing. "But we're supposed to believe 'don't worry, everything's just fine.'"

Those “five things” refer to a May 2016 Government Accountability Office report that found “the FBI hadn't fully adhered to privacy laws and policies or done enough to ensure accuracy of its face recognition capabilities.” In testimony at the hearing, the office stated that out of the six recommendations prescribed back in 2016, only one had been fully addressed.

The five remaining recommendations include publishing privacy documents, conducting annual reviews of accuracy, improving sample sizes in accuracy tests, testing accuracy of partners and conducting privacy impact assessments.

Lawmakers also raised concerns about the TSA’s use of the technology.

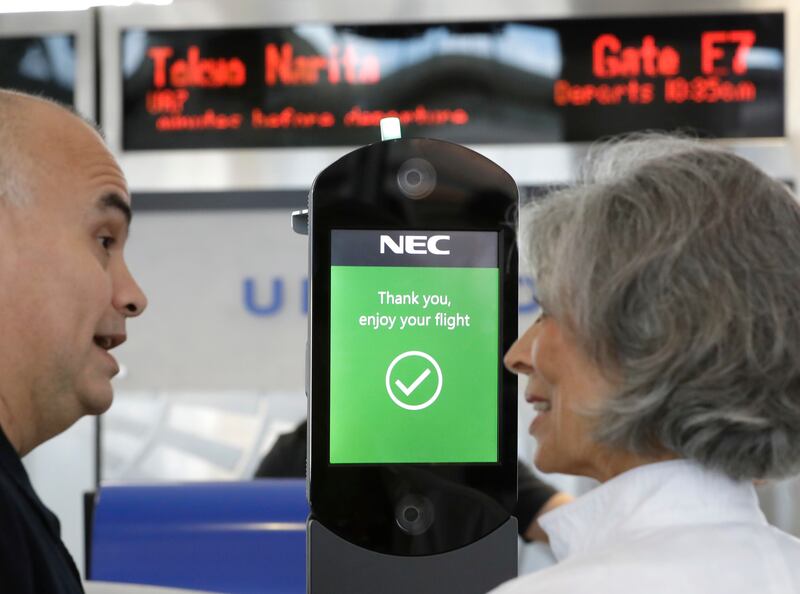

Austin Gould, the TSA’s assistant administrator for requirements and capabilities analysis, testified that the agency's use of facial recognition at airports is helpful in speeding up the check-in process.

"The ability to increase throughput while providing more-effective passenger identification will be extremely beneficial as we continue to see increasing passenger volumes, which are growing at a rate of approximately 4 percent annually," Gould said.

The Department of Homeland Security is in the midst of setting up facial recognition systems that will scan all international passengers, including U.S. citizens, in the top 20 U.S. airports by 2021, according to BuzzFeed News. Face recognition could be used in airports for everything from check-in and baggage drop to security checkpoints, lounge access and boarding.

But critics have raised questions about privacy and the lack of regulatory oversight of this program. There are “no limits” on how airlines could use facial recognition data collected by U.S. Customs and Border Protection, according to the BuzzFeed investigation.

“I think it’s important to note what the use of facial recognition (in airports) means for American citizens,” Jeramie Scott, director of EPIC’s Domestic Surveillance Project, told BuzzFeed News. “It means the government, without consulting the public, a requirement by Congress, or consent from any individual, is using facial recognition to create a digital ID of millions of Americans.”

Meadows criticized the program, stating that the TSA already has many issues without adding facial recognition to the mix.

"Until you get that right, I would suggest that you put this pilot program on hold," he said.

Oversight Committee Chairman Elijah Cummings, D-Md., said he would reconvene witnesses in the next two months to see whether improvements have been implemented.

"American citizens are being placed in jeopardy as a result of a system that is not ready for prime time," Cummings said.