The search for pleasure on the internet can easily reveal its underside, the search for relief from emotional pain. While looking for love drives some to online dating sites, loneliness and alienation drive others to seek help in therapy. Web programs have grown up recently around the treatment of psychological conditions like depression. Yet the therapeutic relation may be even more difficult to commodify or take online than the search for companionship.

Today, cases of depression have ballooned almost 20% in a decade, the World Health Organization reports, making it “the leading cause of disability worldwide.” The number of people globally living with depression, according to a revised definition, had reached 322 million in 2015, up 18.4% since 2005.

Meanwhile, the labor of treating affective conditions, a burden that traditionally may have fallen to a parish priest or similar spiritual figure, is becoming expensive. The years of training of psychologists in a “talking cure” make the hourly rate prohibitive when billed en masse to the public health system.

The treatment for depression, if it is not medicated with antidepressants, is currently cognitive behavior therapy, or CBT. CBT is a regime like pharmacy but without drugs. It mimics pharmacological action by taking an approach to mood that minimizes it as a kind of thought, instead imagining it more on the model of artificial intelligence in need of “reprogramming.”

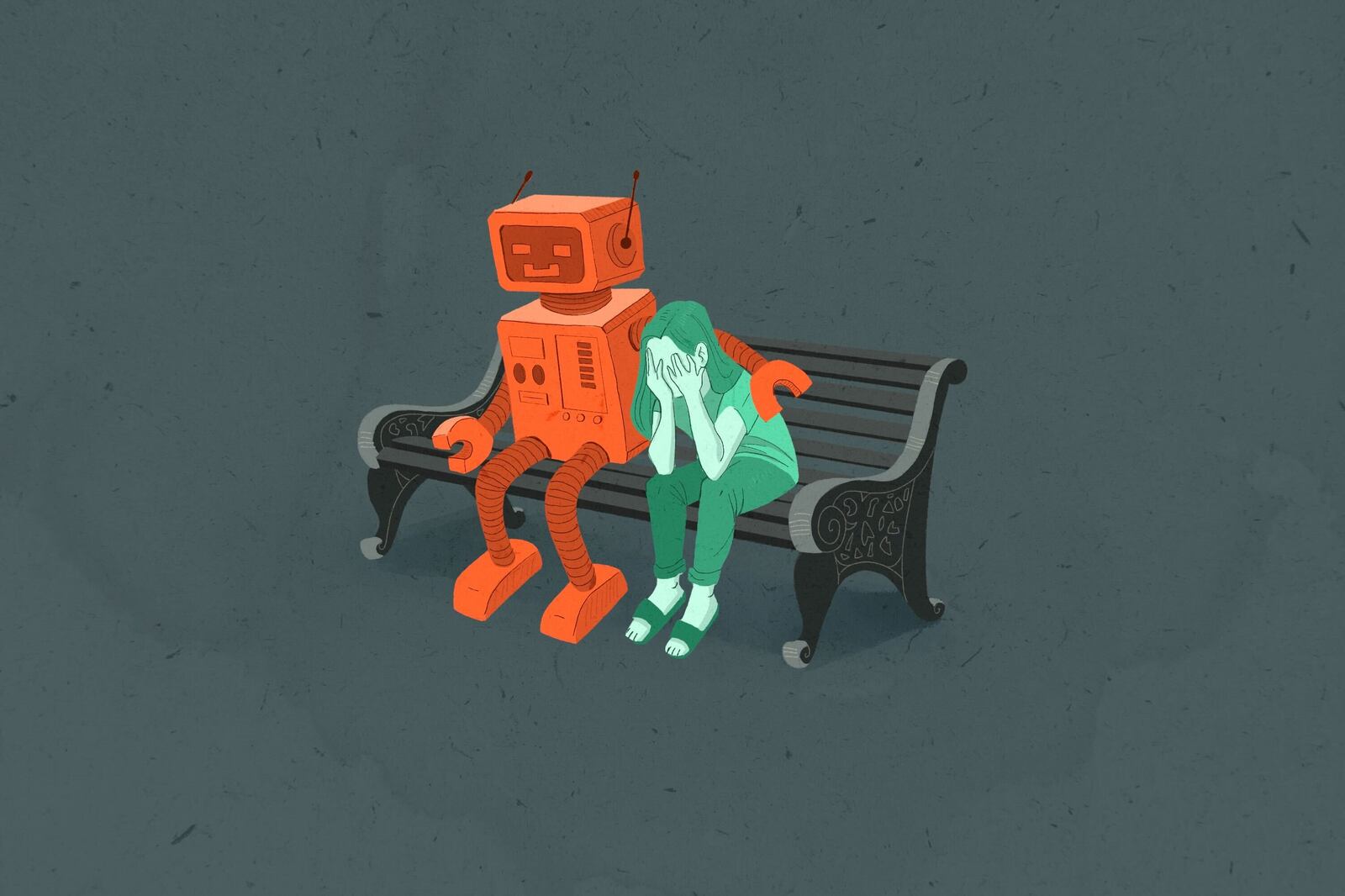

And artificial intelligence, now advanced enough to respond appropriately to the concerns of patients, is being used to reduce the physical burden of depression remotely. While the technology presents opportunities to see a “therapist” with the click of a mouse, the interaction may do more harm than good to those seeking genuine healing.

Enter “Moodzone,” a webpage on the United Kingdom’s National Health Service site that advocates the use of “self-help therapies.”

These are, it explains, “psychological therapies that you can do in your own time to help with problems like stress, anxiety and depression. ... They can be a useful way to try out a therapy like cognitive behavioral therapy to see if it’s for you.”

The site adds that these therapies can also be convenient if “you’re short of time, you have family or work commitments, you can’t get out easily, you want a therapy that’s completely anonymous” and so on.

Online treatments for depression like Moodzone, however, raise the question of whether there is something manipulative in being drawn to confide online to a program that is simulating empathy and understanding. We might ask whether empathic responses are just a series of signs strung together like any other text, or whether there is something above and beyond the form of words being given and received in “real human interaction,” and specifically in psychotherapy.

Behavioral psychology describes its subjects on the model of a kind of “bot”; it imagines human beings as a string of code that results in apparent action. The opposition at stake is that between authentic and inauthentic, and whether the Turing Test, a test to see whether a computer is capable of thought, reduces the whole population of “other minds” to a believable simulation. The reason cognitive behavior therapy suits the Turing Test is that it comes from the same model of other minds; that is, the idea that the other is a black box, opaque to me and known only from its observable gestures.

Any chatterbot can satisfy the test; the question this raises is where that leaves the human interlocutor. Whether he or she is being deceived about the responses being human, or the simulation is known to be supplied in lieu of human interaction, can a person really be said to be receiving the “same” gratification as from ordinary conversation?

If it makes no difference whether the confidante is really listening or is just a simulation of listening, then perhaps it would be possible that the need for care and empathy could be met online by a bot.

But this seems intuitively implausible.

People report feeling deceived when, on dating websites, they are persuaded to pay over their savings to a phantom lover who turns out to be a Nigerian syndicate, or when they find that kids in chatrooms are being groomed for sexual abuse by pedophiles posing as peers. In some circumstances, offering to meet emotional need by simulation is more than artificial; it is deceptive.

As a trained psychoanalyst, Sherry Turkle, author of “Alone Together,” prizes authenticity, which she describes as following from “the ability to put oneself in the place of another, to relate to the other because of a shared store of human experiences: we are born, have families, and know loss and the reality of death. A robot, however sophisticated, is patently out of this loop.”

But judging authenticity by shared content might also miss a richer engagement that intimacy implies. Beyond content, there is a synchrony in intimacy that arises from colocation, a pleasure in being together by whatever means this takes place.

“Sociable robots serve as both symptom and dream: as a symptom, they promise a way to sidestep conflicts about intimacy; as a dream, they express a wish for relationships with limits, a way to be both together and alone.” Turkle’s discussion of online companionship suggests there is an anxiety about intimacy experienced currently that might be drawing people to the potentials of technology, offering “ways to be in relationships and protect ourselves from them at the same time.”

She looks at the question of intimacy in relation to our “devices.” “Digital connections and the social robot may offer the illusion of companionship without the demands of friendship. Our networked life allows us to hide from each other, even as we are tethered to each other.”

But the factors pulling people in are perhaps not based on an appraisal of what technology actually offers. “We don’t seem to care what these artificial intelligences ‘know’ or ‘understand’ of the human moments we might ‘share’ with them. At the robotic moment, the performance of connection seems connection enough.”

And she explains this in terms of “Darwinian buttons,” evolutionary traits that might cause us to confuse the robotic response with the one we seek. The construction of sociable robots, both physical care bots and algorithms, makes use of our anthropomorphism, “how we see robots as close to human if they do such things as make eye contact, track our motion, and gesture in a show of friendship.” Because we are psychologically prone not only to nurture what we love but to love what we nurture, even “simple artificial creatures” can provoke heartfelt attachment.

While people in Turkle’s survey compare pets with therapeutic robots that keep them company, the comparison is not “on all fours.” The dog and cat (and other mammals) have a sympathetic nervous system like ours, one that links the animal’s survival to a system of emotional responses that steer it holistically through its environment.

It is not just that the dog, like the child, learns by accumulating myriad layers of call-and-response; this much they may have in common with a self-learning robot. It is that this call-and-response works as a bloc of feelings that coheres in a felt reality. It marks the affinity that pets offer. While our dogs may not understand why we are sad, they clearly can recognize the affect because they have their own version of it. (This is Turkle’s “shared experience.”)

More to the point, in having this, the dog detects emotion in us as the way in which she has entered into sociality with her own pack since well before she was our “best friend.” The dog can be a friend precisely because she uses the communion of an emotional milieu as we do.

In a way, we are better able to conceive of the lack of synchrony in a robot when we think of how other people can disappoint us. If I reach out to a friend, but she is too busy to respond, then I may feel disappointed. I was refused the feeling that I customarily have when we “meet.” The way this pleasurable process of friendship can fail, or can be interrupted, shows us what is at stake in the virtue of authenticity.

And in the inauthentic interaction (when, say, the call-center voice starts out friendly because it wants to solicit a donation) we have our clue to the shortcomings of simulation. It is not only that we want to be greeted with friendship “for ourselves” and not for the instrumental purpose that we can supply. We want to have that milieu proposed to us by the other person as an end in itself. That the intersubjective is a milieu of pleasure is at the nub of why other creatures who don’t “do” life as feeling cannot produce that synchrony for us. Even the most difficult, selfish and resistant human beings present us with the reality that there is such a milieu where human meeting happens (in their very act of withholding it).

This is why it seems likely that robot-human communion is something like a category error (trying to get “blood from a stone”). But the simulation of comfort is fascinating from the other direction, for what it draws from the psychotic mental state. Hearing voices, seeing visions, imagining threats: these distressing disturbances of real perception are a product of the psychical capacity called “projection,” which is the conduit of our cognitive as well as our emotional lives. To the extent artificial intelligence (and more generally the commodity) gratifies our need instrumentally, it does so through a one-way mirror that we ourselves hold up.

It is in private domains, in the intimate arrangements of love, that the frauds of freedom are most keenly felt. The connection between self and others threatens to become thoroughly transactional. We need to go via Turing’s black box to understand what it is that we want from others, when we desire and when we say we care.

The red herring of the chatbot disguises the incurable pain of living in an instrumental world. What can be done to remedy it, when bodily need is separated from psychical satisfaction and treated as something commodified?

Between psychology and sociology we dwell as hapless “desiring subjects.” Now we are morphing, under the pressures of the technological and the digital, remaking our most personal adventures in intimacy to mean something new.

This essay is a modified excerpt from Robyn Ferrell’s “Philosophical Essays on Free Stuff,” published by Rowman & Littlefield. Ferrell is an adjunct professor at the Centre for Law, Art and Humanities at the Australian National University and in gender & cultural studies at the University of Sydney. She is the author of several books of philosophy and a book of creative non-fiction, “The Real Desire,” which was shortlisted for the NSW Premier’s Award in 2005.