The U.S. Federal Trade Commission is investigating the operations of ChatGPT creator OpenAI, citing concerns over how the company harvests data on consumers and whether false information about individuals has been spread through the inquiry-driven platform.

The FTC investigation was first reported Thursday by The Washington Post, which also shared a leaked document outlining details of the probe.

ChatGPT answers questions and can produce prompt-driven responses like poems, stories, research papers and other content that typically read as though written by a human, although the platform’s output is notoriously rife with errors.

Emerging AI tools have raised wide-ranging questions and concerns about personal data privacy, job replacement and even the possibility of an existential threat on the scale of a global nuclear war or an unforeseen biological disaster.

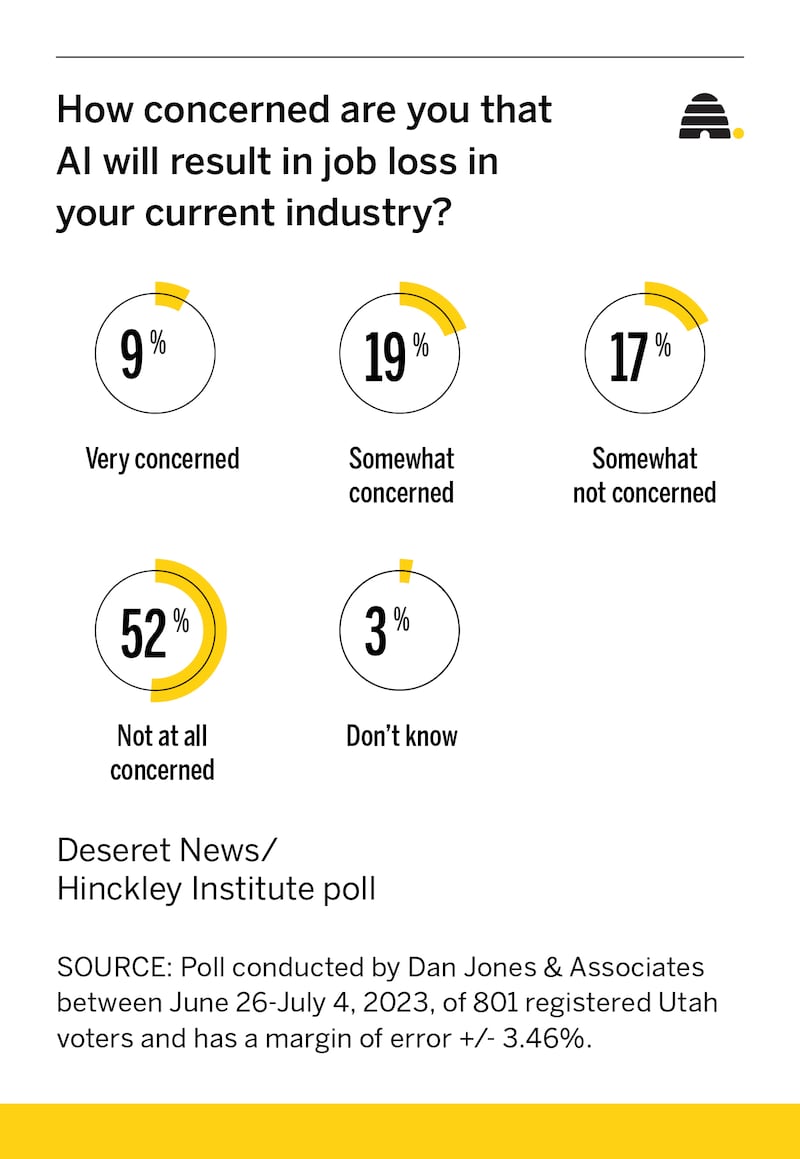

In a new statewide Deseret News/Hinckley Institute of Politics poll, Utahns shared their level of worry about potential job losses. In a poll conducted last month, they weighed in on broader concerns about new artificial intelligence-driven platforms like ChatGPT, DALL-E, Google’s Bard and others.

OpenAI co-founder and CEO Sam Altman tweeted a response Thursday after news of the FTC investigation became public, registering his chagrin that the investigation was revealed via a leaked document but pledging to cooperate with the FTC investigators.

“It’s very disappointing to see the FTC’s request start with a leak and does not help build trust,” Altman tweeted. “That said, it’s super important to us that out (sic) technology is safe and pro-consumer, and we are confident we follow the law. Of course we will work with the FTC.”

Altman has become something of a de facto figurehead for generative AI tools thanks to the enormous response to the inquiry-based ChatGPT platform, which launched publicly last November and has since attracted over 100 million users. In May, he testified before a U.S. Senate committee as federal lawmakers scramble to build new regulatory oversight to keep AI tools in check.

Altman readily agreed with members of the U.S. Senate Judiciary Subcommittee on Privacy, Technology and the Law that new regulatory frameworks were in order as AI tools in development by his company and others continue to advance by leaps and bounds. He also warned that, as AI continues to advance, it has the potential to cause widespread harm.

“My worst fears are that we, the field of technology industry, cause significant harm to the world,” Altman said. “I think that can happen in a lot of different ways. I think if this technology goes wrong, it can go quite wrong and we want to be vocal about that.

“We want to work with the government to prevent that from happening, but we try to be very clear-eyed about what the downside case is and the work we have to do to mitigate that.”

The FTC’s 20-page civil subpoena requests a wide range of information, including how OpenAI trains its AI engine, where its data comes from, how it handles the harvesting of copyrighted material and private personal information, and how the company mitigates “false, misleading or disparaging” statements about individuals.

During the Senate hearing in May, lawmakers bemoaned their collective failure to take timely action to protect the public from the harms associated with social media platforms before they became an issue and vowed not to make the same mistake when it comes to regulating emerging generative AI tools.

Sen. Richard Blumenthal, D-Conn., who chairs the U.S. Senate Judiciary Subcommittee on Privacy, Technology and the Law, noted the hearing was the first in a planned series on oversight of artificial intelligence advancements and was “intended to write the rules of AI.”

Blumenthal also shared his concerns about AI-driven tools advancing to the point where they begin widely replacing human workers, a fear shared by Microsoft co-founder Bill Gates, who told CNBC in a May interview that a possible future scenario could include AI-powered humanoid robots becoming more affordable than human laborers and beginning to replace blue-collar workers.

Utahns, however, don’t appear to be particularly worried about worker ‘bots taking over their jobs at the moment, according to results from a new Deseret News/Hinckley Institute of Politics poll.

The statewide survey found that only 28% of respondents who are currently working are concerned about AI leading to job losses in their industries, including just 9% who said they were very concerned. A solid majority of poll participants, 69%, said they weren’t worried about artificial intelligence-driven tools replacing them at work, with 52% saying they were not at all concerned.

The survey of 801 registered Utah voters was conducted June 26-July 4 by Dan Jones and Associates and has an overall margin of error of plus or minus 3.46 percentage points.

But results from polling done in June by the Deseret News/Hinckley Institute of Politics reveal that while the majority of Utahns may not be concerned specifically about AI advancements leading to job loss, they are more broadly worried about the potential impacts of artificial intelligence.

In the statewide survey conducted May 22-June 1, 69% of respondents said they were somewhat or very concerned about the increased use of artificial intelligence programming, while 28% said they were not very or not at all concerned about the advancements.

The same poll also included questions about AI regulation and which government entity, if any, should be tasked with overseeing the artificial intelligence sector.

Utahns appear divided on whether to increase government regulation of AI tools. While a plurality of poll participants, 43%, said they’d like to see regulation increased, 19% said AI regulation should be decreased and 26% said the status quo should be maintained.

As for which level of government should oversee artificial intelligence advancements, a challenge reflected in the current hodgepodge of regulatory efforts by both state and federal lawmakers, a majority of poll participants, 53%, said the federal government should be in charge. Another 22% said state government should oversee AI, and 17% said government should not be involved in regulating tech companies working on artificial intelligence.